Ingeniería e Investigación

Print version ISSN 0120-5609

Ing. Investig. vol.31 no.1 Bogotá Jan./Apr. 2011

Analysing collision detection in a virtual environment for haptic applications in surgery

María Luisa Pinto Salamanca 1, José María Sabater Navarro 2, Jorge Sofrony Esmeral 3

1 Electronic Engineering. M.Sc. in Engineering - Industrial Automation, Universidad Nacional de Colombia. Professor, School of Electromechanical Engineering, Universidad Pedagógica y Tecnológica de Colombia Uptc. marialuisa.pinto@uptc.edu.co.

2 PhD in Engineering from the Universidad Miguel Hernández. M.Sc. in Industrial Engineering from the ETSII - Universidad Politecnica Valencia, Spain. Professor Coordinator, Virtual Reality and Robotics Lab. vr2, Universidad Miguel Hernández of Elche, Spain. j.sabater@umh.es.

3 PhD in Control Systems, University of Leicester. M.Sc., Technology and Medicine. Professor, Department of Mechanical and Mechatronics Engineering, Universidad Nacional de Colombia. jsofronye@bt.unal.edu.co.

ABSTRACT

This paper presents an analysis of two commercially available haptic interfaces that can be used in surgical training and medical simulation. Integrating their development kits with open-source software libraries such as OpenGL and VCollide led to a proposed solution to problems such as loss of tactile ability and the detection of collisions between objects in a virtual environment.

The haptic applications were based on results from capturing, processing and analysing images to build models for interaction with rigid bodies of medical interest. The application included tools for marking points and paths on virtual surfaces and force reflection algorithms for simulating interactions with surface/volumetric 3D models. Immersion characteristics and the effect of virtual surgical instruments were analysed.

Keywords: haptics, collision detection, medical simulator.

Received: December 7th 2009. Accepted: January 24th 2011

Introduction

Haptics is a research area that studies the interaction of the sense of touch with virtual objects. Haptic interfaces are bi-directional devices that transmit the sensation of force and/or contact to an operator while the operator sends commands to a remote area; i.e. they render advanced interactions that allow procedures requiring tactile sensations to be simulated through force feedback (Gómez, 2005).

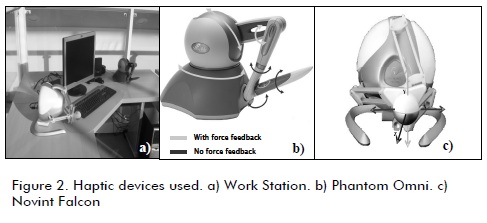

According to Méndez (2008), haptic interfaces such as Phantom Omni and Novint Falcon have specific electro-mechanical characteristics that make them an appropriate choice for simulating procedures requiring special manual ability.

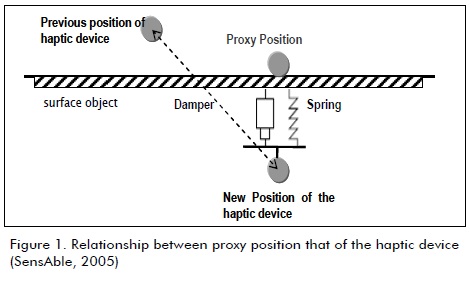

The position of the mechanism's end effector is translated to virtual space by mapping it to a single point with coordinates (x, y, z). This point is variously called the surface contact point (SCP), god-object, haptic interface point (HIP) (Popescu, 1999), proxy (Phantom Omni) or hdTool (Novint Falcon). All geometric transformations are made with respect to this point, or haptic cursor, which supports virtual tool representation, collision detection and force feedback (see Figure 1). If no collision occurs between the cursor and the virtual objects, then the desired position and the real position of the proxy coincide (after all geometric transformations have been applied). If a collision occurs, the physical end effector can "enter" the object but the proxy has to remain on the surface of the virtual object. The force fed back to the haptic device is then calculated from this position discrepancy, and different force interaction models may be used to quantify it (e.g. the mass-spring-damper model used throughout this work). Alternatively, force-feedback signal intensity can be computed as proportional to the overall collision area; the more area that comes into contact, the higher the signal intensity, and vice versa.
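The force computed from the discrepancy between the proxy and the device position can be illustrated with a minimal sketch. It is written in Python for brevity and is not the authors' implementation: the function name, the stiffness and damping values and the omission of the mass term are all assumptions made for illustration.

```python
def spring_damper_force(proxy, hip, hip_velocity, stiffness=0.5, damping=0.002):
    """Return the force pulling the device back towards the proxy.

    Implements F = k * (proxy - hip) - b * v per axis. The mass term of a
    full mass-spring-damper model is left out (assumption). The gains are
    placeholder values, not settings taken from the paper.
    """
    return tuple(
        stiffness * (p - h) - damping * v
        for p, h, v in zip(proxy, hip, hip_velocity)
    )


# Free motion: proxy and device position coincide, so no force is rendered.
free = spring_damper_force((1.0, 2.0, 3.0), (1.0, 2.0, 3.0), (0.0, 0.0, 0.0))

# Contact: the device has penetrated 2 units below the surface along y,
# while the proxy stays on the surface; the force pushes back along +y.
contact = spring_damper_force((0.0, 0.0, 0.0), (0.0, -2.0, 0.0), (0.0, 0.0, 0.0))
```

In free motion the discrepancy is zero, so the returned force vanishes; once the device penetrates the object, the force grows with penetration depth.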

This work proposed developing a set of software libraries to help simulate the interaction between a virtual solid object and a proxy (also represented in the visual scene as a geometrical solid). Collision detection algorithms had to be used so that contact between rigid objects and surface triangular mesh models could be detected. VCollide is a publicly available collision detection library; it was used here with models stored in *.obj files and was easy to integrate with OpenGL and HDAPI. The same software architecture was used in this work for integrating Novint Falcon and its SDK HDAL (Novint, 2008), extending this low-cost haptic device's functionalities. Developments were based on a Windows XP 32-bit platform, Microsoft Visual Studio .NET 2005, an AMD Athlon 64 X2 Dual-Core TK-57 1.90 GHz processor, 1.75 GB RAM and an NVIDIA GeForce 7000M 256 MB graphics unit.

Integrating haptic devices

Software and hardware

Phantom Omni

SensAble Technologies Inc. (http://www.sensable.com/haptic-phantom-omni.htm) makes the Phantom Omni haptic device (Figure 2b), which has a 6-DOF joystick-type serial configuration and 3.3 N rated force feedback along the (x, y, z) axes. The OpenHaptics SDK is provided by the manufacturer and is part of the OpenHaptics Toolkit v.2.0, which allows software involving human-machine interaction between a device and an operator to be developed. The SDK comes complete with a set of development tools and libraries such as HDAPI (Haptic Device API), HLAPI (Haptic Library API), utilities, Phantom Device Drivers (PDD) and source code examples; the OpenHaptics Academic Edition (OHAE) v2.0 libraries were used in this paper.

Novint Falcon

Novint Falcon (Figure 2c), manufactured by Novint Technologies Inc., is a force-feedback haptic device with a parallel kinematic chain configuration. The Novint HDAL SDK contains the drivers and libraries needed for developing haptic environments through a haptic device abstraction layer (HDAL). HDAL SDK v.2.1.3 was used for all the developments presented in this paper.

OpenGL API and VCollide

OpenGL API is a programming interface used to create 3D virtual objects and interact with them in real time. It was developed by Silicon Graphics based on their IRIS GL library and is considered to be the most widely used portable, open-source API in the industry for 2D and 3D applications (Hearn, 2006). It allows new models to be produced, as well as interaction with models created using other graphical suites that export files in the WaveFront *.obj format. Interaction with objects is possible through the gl, glu and glut libraries (Bradford, 2004). Programming can be done in C++, C#, Java or Visual Basic. OpenGL API gl, glu, glut v3.7.6 for Windows was used in the work presented here.

VCollide was selected as our collision detection algorithm because it works with triangular mesh 3D objects, produces very complete collision log reports and has very high performance (measured as time to detect) compared to other open-source libraries (Caselli, 2002; Muñoz-Moreno, 2004). The library was developed by the Geometric Algorithms for Modeling, Motion, and Animation (GAMMA) group (http://www.cs.unc.edu/~geom/) at the University of North Carolina. It is written in C++ and was optimized to work in highly congested environments with objects modelled as 3D triangular mesh surfaces. The experimental results were based on VCollide v.2.01 compiled using Microsoft Visual Studio v.6.0 and v.8.0.

Integrating OpenGL and VCollide

As VCollide and OpenGL can both be used from C++, integrating them within a high-level application was simple. For more complex scenarios, objects were passed to VCollide from WaveFront *.obj files, which are easy to interpret using C++ routines. These files define an object's geometry and other properties which can be visualized. Files with the *.obj extension can be generated using any commercially (or publicly) available 3D-modelling software package.

3D models were generated, imported and exported using the open-source 3D modelling software Blender v.2.46 (www.blender.org), and objects were exported in *.obj format so that they could be added to VCollide as collisionable objects. A class was developed to interpret *.obj files for haptic purposes: it organises information about vertices and surfaces, allows objects to be scaled, calculates the normal vector associated with each triangle in the mesh, draws objects and adds them as collisionable objects. VCollide log reports were used, and only the colliding objects' position was of interest.
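The kind of processing such a class performs (reading vertices and faces, scaling, and computing one normal per triangle) can be sketched as follows. This is an illustrative Python sketch, not the authors' C++ class; the function names are hypothetical, only the `v` and `f` records of the *.obj format are read, and negative (relative) face indices are not handled.

```python
import math


def parse_obj(text, scale=1.0):
    """Read vertices and triangles from WaveFront *.obj text."""
    vertices, triangles = [], []
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] == "v":
            # Vertex record: "v x y z"; apply a uniform scale factor.
            vertices.append(tuple(scale * float(c) for c in parts[1:4]))
        elif parts[0] == "f":
            # Face entries may be "3", "3/1" or "3/1/2"; keep the vertex
            # index only and convert from 1-based to 0-based.
            idx = [int(p.split("/")[0]) - 1 for p in parts[1:]]
            # Fan-triangulate faces with more than three vertices.
            for i in range(1, len(idx) - 1):
                triangles.append((idx[0], idx[i], idx[i + 1]))
    return vertices, triangles


def triangle_normal(a, b, c):
    """Unit normal of triangle (a, b, c) from the cross product of two edges."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    n = (
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    )
    length = math.sqrt(sum(x * x for x in n))
    if length == 0.0:
        return (0.0, 0.0, 0.0)  # degenerate triangle
    return tuple(x / length for x in n)


sample = "v 0 0 0\nv 1 0 0\nv 0 1 0\nf 1 2 3\n"
verts, tris = parse_obj(sample, scale=2.0)
normals = [triangle_normal(*(verts[i] for i in tri)) for tri in tris]
```

The resulting vertex and triangle lists are the kind of data that would then be handed to a collision library and to the drawing routine.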

Experiments and haptic interaction

HDAPI libraries provide direct control of Phantom Omni. Their functionalities and utilities allow the device to be configured, the joystick status (end effector position, velocity and force) to be sensed, and forces to be generated by indicating their direction and intensity. The haptic cursor is represented as a virtual solid that takes into account the geometric transformations to which the object is subjected, i.e. rotations and translations with respect to the scene's coordinate frame.

These functionalities allowed easy integration of VCollide, OpenGL and OpenHaptics SDK HDAPI and rendered the force vector dependent on the collisions detected between objects.

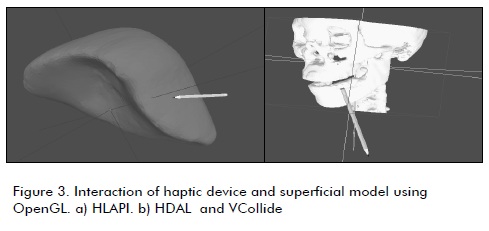

Medical models of various sizes and vertex counts (Tibamoso, 2009) were inserted, and performance was analysed for different object properties using HDAPI and HLAPI from OpenHaptics, VCollide, HDAL and OpenGL. The "tool" or haptic cursor was represented as a pencil, a scalpel, a drill or a hammer (selected from an options menu). The surface mesh models inserted ranged from 528 to 1,794,041 triangular polygons. Analysis concentrated on determining performance for:

1. Haptic tool with surface mesh model and HLAPI: interaction with Phantom Omni device (Figure 3);

2. Haptic tool with surface mesh model and HDAPI/VCollide: interaction with Phantom Omni device; and

3. Haptic tool with surface mesh model and HDAL/VCollide: interaction with Novint Falcon device.

Force reflecting algorithm using volumetric approximations

Rendering force feedback is the main procedure by which the device can transmit tactile sensations to an operator. The force vector can be updated using different forms of interaction and has to take the proxy's dynamic geometrical transformations into account. Once the device has been integrated into the virtual scene, force vectors can be calculated and applied depending on movement, time, position or a combination of these. Based on the information given by the collision detection algorithm (i.e. VCollide), a method was proposed that used the HDAPI and HDAL libraries to render a force vector whose intensity and heading depend on the geometry and volume of the collided section (Sabater, 2009).

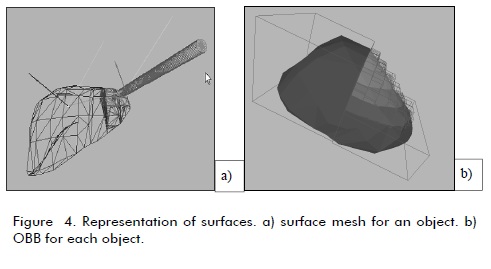

Once a collision had been detected, the VCollide log report and scene set-up provided information about the collided sections, as well as how many triangles overlapped and the volume associated with this overlap on each collided object. The volume of the objects inserted in the scene was determined from the coordinates of the vertices constituting each triangular section (Figure 4). For simplicity, volume was calculated using a geometric primitive shape circumscribing the triangle. A parallelepiped was chosen as this primitive so that the calculation reduced to multiplying three extents (width, height, depth), each being the difference between the maximum and minimum components along the corresponding coordinate axis. This led to applying an object bounding-box algorithm that accepted translational and rotational transformations of the i-th object vsi as follows:

1. Determining (xmin, xmax), (ymin, ymax), (zmin, zmax)

2. Calculating xv = xmax - xmin, yv = ymax - ymin, zv = zmax - zmin

3. Applying the object transformation and returning to step 1
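The three steps above can be sketched in a few lines. This is a minimal Python illustration under stated assumptions: the helper names are hypothetical, the box is axis-aligned in the scene frame, and rotation is shown about the z axis only.

```python
import math


def rotate_z(vertices, angle):
    """Rotate vertices about the z axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in vertices]


def translate(vertices, offset):
    """Translate vertices by the vector `offset`."""
    dx, dy, dz = offset
    return [(x + dx, y + dy, z + dz) for x, y, z in vertices]


def bounding_box_extents(vertices):
    """Steps 1 and 2: min/max per axis, then xv, yv, zv."""
    xs, ys, zs = zip(*vertices)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))


def bounding_box_volume(vertices):
    """Volume of the axis-aligned parallelepiped enclosing the vertices."""
    xv, yv, zv = bounding_box_extents(vertices)
    return xv * yv * zv


section = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 3.0, 0.0), (0.0, 0.0, 4.0)]
volume = bounding_box_volume(section)

# Step 3: after a transformation the extents are recomputed from scratch.
moved = translate(section, (1.0, -2.0, 0.5))
turned = rotate_z(section, math.pi / 2)
```

A translation leaves the extents unchanged, whereas a rotation generally changes the extents of an axis-aligned box, which is why the procedure returns to step 1 after every transformation. Note also that a flat section lying in a coordinate plane has zero extent along one axis and therefore zero volume under this approximation.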

Vector, geometric and volumetric information was used to update the force feedback signal so as to simulate the interaction between virtual solids and the haptic cursor. This information was retrieved from the VCollide log, from which useful data could be extracted: the position of the collided objects Tc1, their vertices vc1, the normal vectors nc1 of section pc1 and the corresponding volume Voc1. It should be pointed out that the information received described collisions between the proxy and the virtual (collisionable) objects. The proposal was thus to render a force vector whose magnitude was proportional to the collided objects' volume and whose direction was the mean normal vector of the collisions.
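A force vector of this kind, with magnitude proportional to the collided volume and direction given by the mean normal, can be sketched as below. The Python code is an illustration only: the function name, the `gain` constant and the choice of summing the per-section volumes are assumptions, not details given in the paper.

```python
import math


def volume_force(normals, volumes, gain=1.0):
    """Force with magnitude gain * total volume along the mean collision normal.

    `normals` holds one normal vector per collided section and `volumes`
    the bounding-box volume of each section. `gain` is a hypothetical
    scaling constant chosen for illustration.
    """
    if not normals:
        return (0.0, 0.0, 0.0)  # no collision, no force
    mean = [sum(n[i] for n in normals) / len(normals) for i in range(3)]
    length = math.sqrt(sum(c * c for c in mean))
    if length == 0.0:
        return (0.0, 0.0, 0.0)  # normals cancel out, direction undefined
    magnitude = gain * sum(volumes)
    return tuple(magnitude * c / length for c in mean)


# Two collided sections facing +z with volumes 2 and 3.
force = volume_force([(0.0, 0.0, 1.0), (0.0, 0.0, 1.0)], [2.0, 3.0], gain=0.1)
```

Normalising the mean normal keeps the magnitude governed by the volume alone, so sections that face different ways influence the heading but not the intensity.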

Experimental results

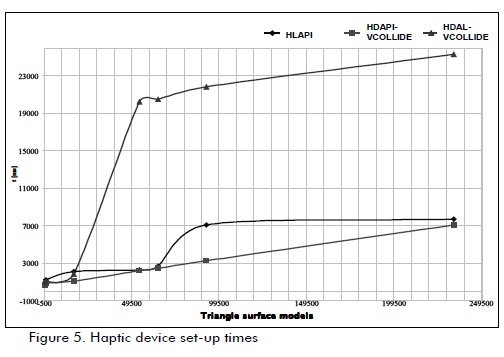

VCollide compared with HLAPI/HDAL for collision detection

Figure 5 shows the set-up times for each device and for different numbers of triangular polygons. When an object having 233,764 triangles was inserted, the user of the Novint Falcon device (with HDAL and VCollide) had to wait about 25,342 ms before being able to use the application. The Phantom Omni device (with HLAPI and HDAPI-VCollide) had shorter set-up times (i.e. 7,719 ms stand-by time).

OpenHaptics SDK had better initialization times using HDAPI-VCollide than with its original high-level library. Nonetheless, force feedback using HLAPI (the manufacturer's standard library) gave better results in terms of intensity and orientation when the dynamic cursor moved along a surface, allowing smoother, more continuous tactile sensations than when HDAPI-VCollide was used. This was only the case when the proxy was represented as a single point; tactile sensation was acceptable if the proxy was a solid, but visual immersion was lost as the objects became superimposed on each other and the proxy seemed to enter the solid.

Another aspect that needed to be considered was graphical rendering and its dependence on the number and type of polygons used to generate the virtual objects and their surface mesh representation. Visual variations were produced using the glPolygonMode function in OpenGL. As mentioned previously, haptic rendering was transparent if the solids were represented as a collection of points or lines. Table 1 shows the other comparison criteria.

Tactile exploration tools for surface mesh models

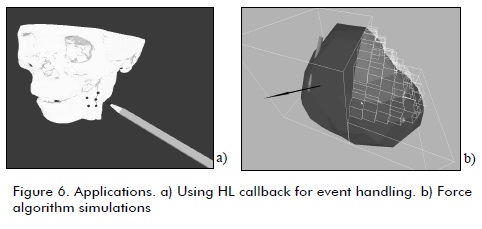

Using existing callback functions in HLAPI and HDAL, it was possible to manipulate the device through events of interest and tactile feedback. Some events of interest were collision detection between the proxy and objects in the virtual scenario and the digital inputs available on the device. These events were used to implement tactile exploration tools for marking points and trajectories on the virtual solid, placing special emphasis on objects of medical interest (Figure 6). An output report was added which mapped all marked points and trajectories.

Figure 6b presents the integration results, including the use of OpenGL, VCollide and HDAPI. This image shows the proposed algorithm's mechanism; the arrow represents the force vector's direction, and its intensity was proportional to contact volume. Although contact volume estimation was imprecise (in particular when dealing with non-convex geometries), it was possible to reduce the discrepancy at the expense of higher computational cost. The experimental results led to the conclusion that the volumetric approximation, and thus the feeling of immersion, became more precise as an object became smaller; this enhanced force reflection properties and made the system appear "more real".

Conclusions

The advantages of using Phantom Omni and HLAPI (provided by the manufacturer) were noticeable in terms of force reflection and tactile sensation; the joystick had smooth dynamic transitions. Nonetheless, a considerable loss of visual immersion was present and the application's realism could be lost as a consequence. It was thought that the library compromised visual immersion to obtain high tactile immersion, using only a single-point representation for the proxy. Consequently, such a software architecture is undesirable for medical simulators. By contrast, using VCollide requires a more complex architecture including algorithms for identifying the triangles and vertices of surface models, transforming and identifying the proxy's position and rendering forces using volumetric contact information. This requires integrating HDAPI (for Phantom Omni devices) and HDAL (for Novint Falcon devices) with VCollide.

The graphical rendering algorithm was transparent when OpenGL was used, with either the OpenHaptics or the HDAL libraries. This was also true for synchronising haptic and graphic threads. Nonetheless, HLAPI applications had reduced performance when surface models having more than 230,000 triangles were loaded, especially in graphical rendering. Scene updates (points of view, haptic cursor transformations and zoom) were automatic with HLAPI, but tactile feedback was only possible for full surface models (GL_FILL); in other words, no tactile information was available when objects were visualized using only the vertices or lines composing a surface mesh model (GL_POINT, GL_LINE). This was not the case when using HDAPI or HDAL.

Simulation focused on exploring functionalities within the context of medical training applications. The objects considered were thus organs or some type of surgical tool. Objects were assumed to be rigid (constant geometry) and atomic (constant topology); in other words, objects could not be divided and their shape did not change upon contact. Simulations only considered rotational and translational transformations; no on-line scaling was performed. Some of the immediate advances needed to use this type of technology in a medical training scenario are real-time elasticity simulation algorithms allowing 3D objects to be represented as deformable, and detailed modelling of special surgical tools such as needles and suture thread, while seeking an attractive balance between computational cost and immersion in specific situations.

References

Bradford, J., Using OpenGL & GLUT in Visual Studio .NET, 2003. http://csf11.acs.uwosh.edu/cs371/visualstudio/index.html, 2004.

Caselli, S., Mazolli, M., Reggiani, M., An experimental evaluation of collision detection packages for robot motion planning. Proceedings of the IEEE/RSJ Intl. Conference on Intelligent Robots and Systems, EPFL, Lausanne, Switzerland, 2002.

Gómez, J.M., Muñoz, V., Dominguez, F., Serón, J., Sistema experimental de Tele-Cirugía. Revista eSalud, Vol. 1, No. 2, Abril-Junio, 2005.

Hearn, D., Baker, M., Gráficos por computadora con OpenGL. PEARSON Prentice Hall, Madrid, 3rd Edition, 2006.

Méndez, L., Pinto, M., Sofrony, J., Definición y Selección de una Interfaz Háptica para Aplicaciones Preliminares de Asistencia Quirúrgica. Memorias del III Encuentro Nacional de Investigación en Posgrados - ENIP 2008, Universidad Nacional de Colombia, Mayo, 2008.

Novint Technologies, Inc., Haptic Device Abstraction Layer (HDAL). Programmer's Guide, HDAL SDK Version 2.1.3, Agosto, 2008.

Muñoz-Moreno, E., Rodríguez, S., Vilora, A., Lamata, P., Martín, M.A., Luis-García, R., Aja, S., Gómez, E., Alberola, C., Detección de colisiones. Un problema clave en la simulación quirúrgica. Informática y Salud (I+S), Vol. 48, Octubre, 2004, pp. 23-35.

Popescu, V., Burdea, G., Bouzit, M., Virtual Reality Simulation Modeling for a Haptic Glove. Computer Animation, 1999. Proceedings. Geneva, Switzerland, 1999, pp. 195.

Sabater, J., Pinto, M., Saltaren, R., Sofrony, J., Azorin, J.M., Perez, C., Badesa, J., Force reflecting algorithm in tumour resection simulation procedures. CARS 2009 - Proceedings of the 23rd International Congress and Exhibition, Berlin, Germany, June 23-27, 2009, pp. 138-140.

SensAble Technologies, Inc., OpenHaptics Toolkit v. 2.0. Programmer's Guide, 2005, pp. 5-3 5-4.

Tibamoso, G., Romero, E., Segmentación y Reconstrucción Simultánea del Volumen del Hígado a partir de imágenes de Tomografía Axial Computarizada, Descripción de proyecto. Universidad Nacional de Colombia, http://www.bioingenium.unal.edu.co/pagpro.php?idp=3Dhigado&lang=es&linea=2, 2009.
